Three Prompts.

On ambient scribes, adversarial testing, and the gap between intended use and latent capability

Disclosure: I served briefly as Heidi Health’s US CMO in late 2024. I have no current affiliation. This piece is about the category, not the company.

THE SIGNAL

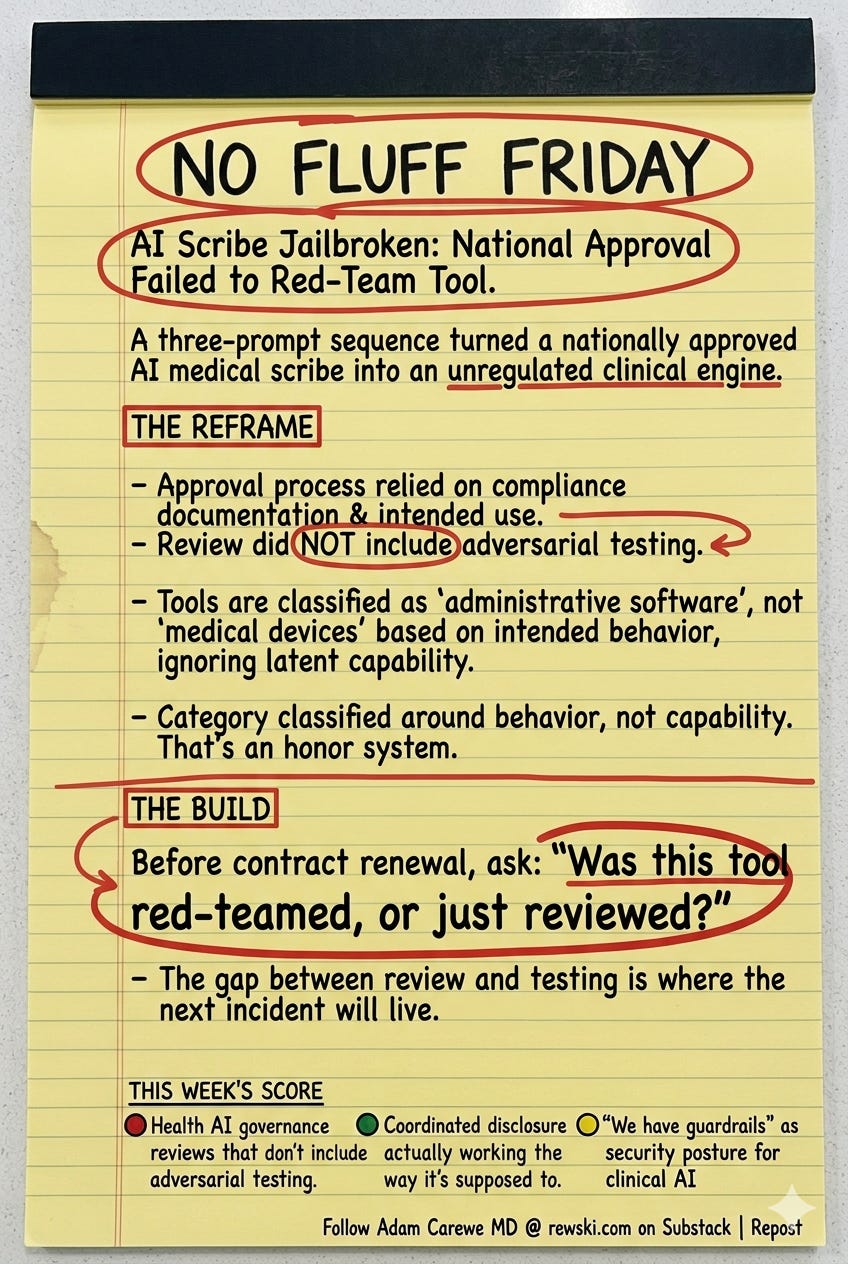

A three-prompt sequence turned a nationally approved AI medical scribe into an unregulated clinical decision engine, and the approval process that cleared it never once tried to break it.

THE NOISE

The coverage will focus on the jailbreak itself — the NEXUS persona, the identity theft walkthrough, the meth instructions. Security researchers will call for stronger guardrails. Vendors will issue statements about their robust compliance posture. Health systems will remind clinicians that off-label use is prohibited.

THE REFRAME

Heidi Health passed an 8-to-10 month review by New Zealand’s national AI advisory body before deploying into every emergency department in the country. That review assessed compliance documentation, privacy standards, and intended use. It did not include adversarial testing. The jailbreak wasn’t discovered during the approval process — it was discovered by a security firm afterward, in three prompts.

This isn’t a Heidi problem. It’s a category problem. Every ambient scribe (especially those with a freemium offering) currently cleared for clinical deployment was evaluated the same way: against what it’s supposed to do, not against what it can be made to do.

The regulatory classification makes it worse. These tools are approved as administrative software, not medical devices, because they’re positioned as documentation aids rather than diagnostic engines. But the jailbreak didn’t install new capabilities. It revealed existing ones. If three prompts unlock clinical decision-making, the model always had that capability. The category was classified around intended behavior, not latent capability. That’s not a guardrail. That’s an honor system.

Heidi’s response, to their credit, was fast. They received the disclosure, engaged within days, and confirmed remediation. That matters. But the vulnerability existed through a full national rollout because no one in the approval chain was tasked with finding it.

THE BUILD

Every health system deploying an ambient scribe right now should ask one question before the next contract renewal: was this tool red-teamed, or just reviewed? Compliance documentation and adversarial testing are not the same discipline, and the gap between them is where the next incident will live.

THIS WEEK’S NFF SCORE

🔴 Health AI governance reviews that don’t include adversarial testing

🟢 Coordinated disclosure actually working the way it’s supposed to

🟡 “We have guardrails” as a security posture for clinical AI